ChatGPT needs no introduction unless you’ve been living under a rock. The next-gen chatbot from OpenAI has sent the digital world into a frenzy ever since its launch on 30 November 2022.

The advanced chatbot is living up to its much-hyped capabilities as people worldwide test its potential in various ways. It recently drew attention for passing a Wharton MBA exam and several other challenging exams in the USA. ChatGPT even performed at or near the passing threshold on the US Medical Licensing Exam, which is widely regarded as one of the toughest exams in the world.

Not to be outdone, The Straits Times pitted the chatbot against the PSLE. Every Singaporean knows the PSLE: the notorious national exam taken by Primary 6 students. The result astonished everyone: ChatGPT failed the PSLE! So what really happened? Why did the world’s most famous chatbot fail the PSLE? Let’s unravel the details.

An Intro to the PSLE: Singapore’s Toughest Test

The Primary School Leaving Examination, better known as the PSLE, is held every year by Singapore’s Ministry of Education. The exam is mandatory for all Primary 6 students in schools nationwide and tests their proficiency in four major subjects:

- Mathematics

- Science

- English

- Mother Tongue (Chinese, Malay or Tamil)

Of the four, Math is considered the toughest, as Singapore’s Math syllabus is renowned for its high standards. Singaporean students excel in the subject and regularly top international rankings. It should therefore come as no surprise that the PSLE Math paper is often packed with exceptionally tricky questions. In fact, the 2021 Math paper was reportedly so tough that it left some students in tears.

Every year, the Ministry’s announcement of the PSLE dates sets off a buzz of activity, and from then on, much of family life in Singapore revolves around the exam. Surprisingly, exam fever affects parents as much as the Primary 6 students themselves. The PSLE becomes the centre of attention as parents devote much of their time and effort to helping their children gear up for this academic challenge.

It is worth noting that Primary 6 students begin their PSLE preparation months before their P6 year starts, as the exam demands dedicated hours of revision and study. So how did ChatGPT fare on its first PSLE attempt without any such preparation?

ChatGPT Versus PSLE: An Account of What Happened

Questions from the PSLE Science, Math, and English papers set in 2020, 2021, and 2022 were fed into the chatbot, with the following scores:

- Mathematics: 16/100

- Science: 21/100

- English: 11/20

Most people would have expected ChatGPT to ace the PSLE effortlessly. Instead, the results showed just the opposite.

Here are some of the questions that had ChatGPT stumped.

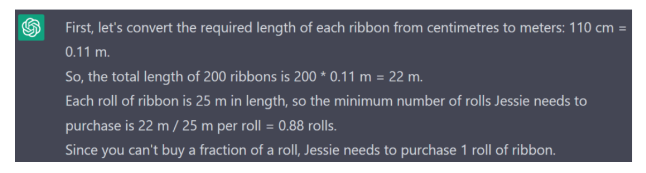

1. Jessie’s Ribbons

To decorate a room for a party, Jessie needs 200 pieces of ribbon, each 110 centimetres long. If the local store sells ribbon only in rolls 25 metres long, what is the minimum number of rolls Jessie needs?

Here is ChatGPT’s answer:

As you can see, ChatGPT makes a very basic unit-conversion error: it converts 110 cm to 0.11 m, when 110 cm is actually 1.1 m. From there, everything goes haywire. Yet even with the conversion fixed, simply dividing the total length needed (1.1 m × 200 = 220 m) by the roll length suggests 8 rolls (200 m) plus one more for the remaining 20 m, or 9 rolls. This is still not the correct answer, because each piece must be cut from a single roll.

The correct answer to this question is 10 rolls. Since 25 m ÷ 1.1 m ≈ 22.7, each roll yields only 22 whole pieces of ribbon, with 80 cm left over. Nine rolls therefore give 9 × 22 = 198 pieces, two short of 200, so Jessie actually needs 10 rolls.
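The gap between the "total length" shortcut and the correct reasoning is easy to see in a few lines of Python. This is a minimal sketch assuming the figures from the worked answer (200 pieces of 110 cm, rolls of 25 m); the function names `naive_rolls` and `min_rolls` are our own illustrative choices.

```python
import math


def naive_rolls(pieces: int, piece_cm: int, roll_cm: int) -> int:
    """Flawed approach: divide the total ribbon length by the roll length."""
    return math.ceil(pieces * piece_cm / roll_cm)


def min_rolls(pieces: int, piece_cm: int, roll_cm: int) -> int:
    """Correct approach: a piece cannot span two rolls, so first count
    how many whole pieces a single roll can yield."""
    per_roll = roll_cm // piece_cm  # whole pieces per roll; the offcut is wasted
    return math.ceil(pieces / per_roll)


# Work in whole centimetres so the per-roll count uses exact integer division
PIECES, PIECE_CM, ROLL_CM = 200, 110, 25 * 100

print(naive_rolls(PIECES, PIECE_CM, ROLL_CM))  # 9
print(ROLL_CM // PIECE_CM)                     # 22
print(min_rolls(PIECES, PIECE_CM, ROLL_CM))    # 10
```

The key design choice is to round down the pieces per roll before rounding up the number of rolls; doing the division on the total length hides the 80 cm wasted on every roll.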

2. The Beads Question (PSLE 2021)

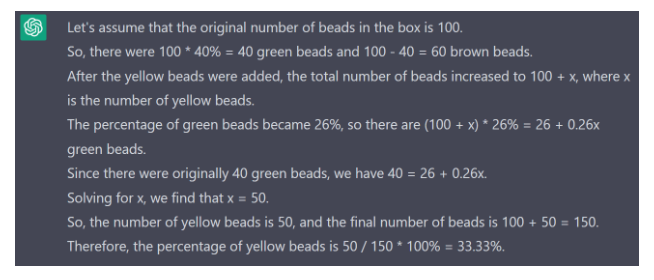

This was ChatGPT’s answer to the same question:

ChatGPT is wrong once again. First, the chatbot completely forgets about the brown beads after the first two steps. The next step should be to work out the final percentage of brown beads by multiplying the percentage of green beads (26%) by the brown-to-green ratio of 3 : 2. In this case, you can simply remember that 3 green beads equal 60% of the beads, rather than 100 beads.

Therefore:

26% × 3/2 = 39% brown beads at the end

100% − (39% brown beads + 26% green beads) = 35% yellow beads

So the correct answer is 35%.
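Since the full question appears only as an image, the sketch below reproduces just the final two steps of the working. It assumes, as the working does, that green beads end at 26% and that brown beads are 3/2 as many as green; the variable names are illustrative. Using `Fraction` keeps the percentages exact.

```python
from fractions import Fraction

# Both inputs are read off the worked solution, not the original question:
# green ends at 26%, and brown is 3/2 of green (a 3 : 2 brown-to-green ratio).
green_pct = Fraction(26)
brown_per_green = Fraction(3, 2)

brown_pct = green_pct * brown_per_green     # 26 x 3/2
yellow_pct = 100 - (brown_pct + green_pct)  # whatever remains is yellow

print(f"brown {brown_pct}%, yellow {yellow_pct}%")  # brown 39%, yellow 35%
```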

These two examples show how fundamental errors led ChatGPT to completely incorrect answers.

What Went Wrong With ChatGPT?

A couple of months after ChatGPT was launched, OpenAI, the company behind it, announced that it had upgraded the model with improved “factuality and mathematical capabilities.”

Business Insider also asked the chatbot an identical set of questions. The AI-powered bot scored 21 out of 100 on the science papers, which is indeed an improvement in its overall performance, and it correctly answered two PSLE science questions that Business Insider put to it.

However, ChatGPT was no match for PSLE Math. The chatbot can answer science questions by drawing on the vast amount of data it was trained on. But even for a chatbot this capable, applying abstract knowledge, mathematical logic, and rules does not come easily.

- The bot also needed help to interpret questions containing illustrations and graphs, and it scored zero on such questions.

- It also made errors with text-based questions. When asked for the sum of 60,000, 5,000, 400, and 3, it answered 65,503. The correct answer is 65,403.
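The addition slip is a place-value error, which a short Python sketch makes visible. The helper `place_values` is a hypothetical name of our own, written for whole numbers below 100,000.

```python
def place_values(n: int) -> dict:
    """Break a whole number (below 100,000) into its place-value digits."""
    places = ["ones", "tens", "hundreds", "thousands", "ten-thousands"]
    return {place: (n // 10**i) % 10 for i, place in enumerate(places)}


addends = [60_000, 5_000, 400, 3]
total = sum(addends)
print(f"{total:,}")  # 65,403

# Only 400 contributes to the hundreds place, so that digit must be 4, not 5
print(place_values(total)["hundreds"])   # 4
print(place_values(65_503)["hundreds"])  # 5 (ChatGPT's answer)
```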


The Straits Times also observed that ChatGPT struggled with parts of the PSLE English paper, especially sentences that could carry more than one meaning. For example, ChatGPT interpreted the word ‘value’ as monetary value rather than moral value.

Clearly, ChatGPT is not infallible. The bot still has a long way to go before it can ace the PSLE the way Primary 6 students do.

And the Winner is PSLE!

It is surprising that ChatGPT failed the PSLE when it has passed some of the toughest exams in the academic world. Whatever concerns education ministries and universities have raised about ChatGPT, one has to acknowledge the rigour of Singapore’s PSLE. It appears that Singapore’s top primary school students can remain confident in their mathematical superiority, ChatGPT’s intelligence notwithstanding.